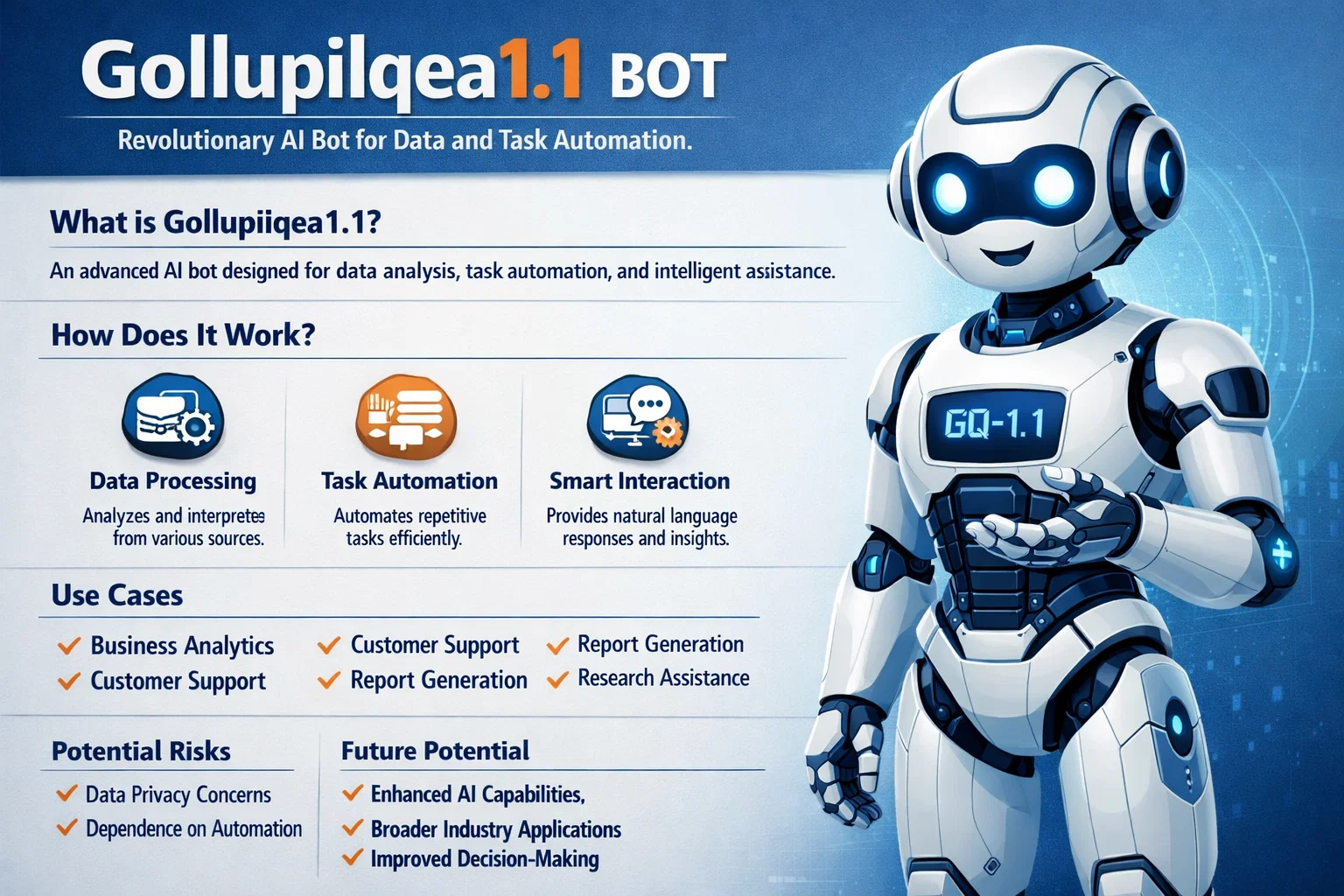

The term gollupilqea1.1 bot has started appearing in online discussions, technical forums, and SEO research spaces, leaving many people curious about what it actually represents and why it matters. At first glance, the name feels cryptic, almost like an internal build label rather than something meant for public attention. Yet that mystery is exactly what has drawn interest from developers, digital marketers, analysts, and even everyday users who encounter unusual bot activity on platforms and websites.

In simple terms, gollupilqea1.1 bot is discussed as a specific automated agent or bot signature associated with structured, rule-based, or semi-intelligent automation. While not everyone agrees on a single definition, the keyword is often connected with crawling behavior, automated interaction patterns, and system testing environments. This article breaks down everything you need to know in plain language, without hype or confusion, so you can understand where this bot fits in the broader automation and SEO ecosystem.

Understanding the Concept Behind Automated Bots

Automation on the internet did not start with advanced artificial intelligence. Long before machine learning became mainstream, bots were already handling repetitive tasks, checking system health, indexing pages, and interacting with APIs. These bots operate based on logic, scripts, and triggers rather than human judgment.

What makes modern bots more interesting is how layered they have become. Instead of simple “if this, then that” logic, newer systems combine data analysis, response timing, and adaptive behavior. Discussions around gollupilqea1.1 bot often place it somewhere in this spectrum, suggesting a versioned system that evolved from earlier automated models.

Another important aspect of bots is intent. Some bots exist to help, such as search engine crawlers or uptime monitors. Others may serve internal testing or analytics purposes. Understanding intent is key before labeling any bot as good or bad, especially when encountering unfamiliar identifiers in logs or reports.

Why Versioning Matters in Bot Identification

The “1.1” in the name is more than cosmetic. Versioning usually signals iteration, improvement, or refinement over a previous release. In software development, version numbers help teams track changes, bug fixes, and feature enhancements. The same logic applies to bots.

When analysts see identifiers like gollupilqea1.1 bot, they often assume there was an earlier version with limitations that needed adjustment. This might involve performance tuning, better compliance with protocols, or improved handling of edge cases. Versioning also helps administrators distinguish between different behaviors coming from similar automated agents.
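To make the versioning idea concrete, here is a minimal sketch of how an administrator might split an identifier into a name and a comparable version. The pattern is an assumption about how such identifiers are written (a name, a dotted number, and an optional trailing "bot"); `parse_identifier` and `BotIdentifier` are hypothetical names, not part of any published tool.

```python
import re
from typing import NamedTuple, Optional, Tuple


class BotIdentifier(NamedTuple):
    name: str
    version: Tuple[int, ...]


# Assumed shape: letters, then a dotted numeric version, then an optional " bot".
_IDENTIFIER = re.compile(
    r"^(?P<name>[A-Za-z_-]+?)[/ ]?(?P<version>\d+(?:\.\d+)*)(?:\s+bot)?$",
    re.IGNORECASE,
)


def parse_identifier(raw: str) -> Optional[BotIdentifier]:
    """Return the name and version as a tuple of ints, or None if no version is found."""
    match = _IDENTIFIER.match(raw.strip())
    if match is None:
        return None
    version = tuple(int(part) for part in match.group("version").split("."))
    return BotIdentifier(match.group("name").lower(), version)


current = parse_identifier("gollupilqea1.1 bot")
earlier = parse_identifier("gollupilqea1.0 bot")  # hypothetical earlier release
if current and earlier and current.name == earlier.name:
    print("newer than earlier release:", current.version > earlier.version)
```

Comparing versions as integer tuples rather than strings means that a release such as 1.10 sorts after 1.9, which keeps behavior changes lined up with the right release.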

From an SEO and analytics standpoint, versioned bots can show patterns over time. If behavior changes after a version update, it often indicates intentional refinement rather than random activity.

Common Contexts Where This Bot Is Mentioned

Mentions of gollupilqea1.1 bot typically appear in server logs, traffic analysis tools, or niche technical discussions. Website owners sometimes notice unusual access patterns and look up unfamiliar user agents to determine whether they are harmless or potentially disruptive.
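As a rough illustration of that lookup, the sketch below counts requests per user agent in an access log. It assumes the server writes the common "combined" log format, where the user agent is the last quoted field. The sample lines are invented for illustration, and `count_user_agents` is a hypothetical helper.

```python
import re
from collections import Counter

# Combined log format: ip, identity, user, [time], "request", status, size, "referer", "agent"
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "(?P<referer>[^"]*)" "(?P<agent>[^"]*)"$'
)


def count_user_agents(lines):
    """Count requests per user agent string, skipping lines that do not parse."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.match(line.strip())
        if match:
            counts[match.group("agent")] += 1
    return counts


# Invented sample lines (documentation IP ranges), for illustration only.
SAMPLE_LOG = [
    '203.0.113.7 - - [10/Oct/2024:13:55:36 +0000] "GET /index.html HTTP/1.1" 200 2326 "-" "gollupilqea1.1 bot"',
    '203.0.113.7 - - [10/Oct/2024:13:55:40 +0000] "GET /blog/ HTTP/1.1" 200 5120 "-" "gollupilqea1.1 bot"',
    '198.51.100.4 - - [10/Oct/2024:13:56:02 +0000] "GET / HTTP/1.1" 200 2326 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
    "this line is malformed and will be skipped",
]

for agent, hits in count_user_agents(SAMPLE_LOG).most_common():
    print(hits, agent)
```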

Another context is testing environments. Developers often deploy bots to simulate traffic, test load balancing, or verify API responses. In such cases, identifiers are often chosen to be distinctive so that test traffic is not mistaken for a public crawler. An unusual name therefore makes sense when the bot is not meant to index content or interact with user-facing features.

There are also SEO research communities that catalog and analyze bot signatures. Their goal is not fear-mongering but understanding how automation interacts with modern websites, which is essential for performance optimization and security.

How Automated Bots Interact With Websites

At a technical level, bots communicate with websites through HTTP requests, just like browsers do. The difference lies in speed, consistency, and intent. Bots can request hundreds of pages in seconds, follow strict patterns, or target specific endpoints repeatedly.

A bot such as gollupilqea1.1 bot is often discussed as being structured and predictable rather than chaotic. Predictable bots are easier to manage because administrators can identify them, allow them, or block them based on clear rules. Predictability often points to a controlled system, though it is not proof of good intent, so it is best treated as one signal among several.

Understanding this interaction helps website owners avoid overreacting. Not all unusual traffic is dangerous, and not all bots deserve blanket bans.

SEO Implications of Bot Traffic

Search engine optimization is deeply connected with how bots view and access your site. Search engines rely on crawlers to index pages, analyze structure, and evaluate content relevance. However, not every bot contributes to SEO in a positive way.

When traffic from unfamiliar bots appears, SEO professionals first look at behavior. Are pages being requested logically? Is the bot respecting robots.txt? Is it avoiding sensitive areas like admin panels? Discussions around gollupilqea1.1 bot often suggest that it behaves in a controlled and rules-based manner, which is generally a positive sign.
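One of those questions, whether the bot respects robots.txt, can be checked mechanically. The sketch below uses Python's standard `urllib.robotparser` to list which requested paths a given user agent was asked not to fetch. The robots.txt content and the requested paths are made-up examples, and `disallowed_requests` is a hypothetical helper name.

```python
from urllib.robotparser import RobotFileParser

# Example robots.txt content; a real check would load the site's own file.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /private/
"""


def disallowed_requests(robots_txt, user_agent, requested_paths):
    """Return the requested paths that robots.txt disallows for this user agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [path for path in requested_paths if not parser.can_fetch(user_agent, path)]


# Paths you might have pulled from your logs for one bot.
seen_paths = ["/", "/blog/post-1", "/admin/login"]
violations = disallowed_requests(ROBOTS_TXT, "gollupilqea1.1 bot", seen_paths)
print("disallowed paths requested:", violations)
```

An empty result is consistent with a rule-following bot, while repeated hits on disallowed paths are a reason to look more closely.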

That said, excessive bot traffic can skew analytics, inflate page views, or stress servers. The key is balance and informed decision-making rather than panic.

Related Keywords and Concepts Worth Knowing

To fully understand conversations around this bot, it helps to be familiar with related terminology. These concepts often appear alongside discussions of gollupilqea1.1 bot and provide valuable context.

| Related Term | Simple Explanation |
|---|---|
| User Agent | A string identifying the browser or bot making a request |
| Web Crawler | A bot that systematically browses the web |
| Automation Script | Code designed to perform tasks without manual input |
| Server Logs | Records of requests made to a server |
| Bot Management | Tools and strategies to control automated traffic |

Understanding these terms makes it easier to evaluate whether a bot’s presence is beneficial, neutral, or harmful.

The Difference Between Helpful and Harmful Bots

One of the biggest misconceptions about bots is that they are inherently bad. In reality, the internet could not function at scale without automation. Search engines, monitoring tools, and content delivery networks all rely on automated requests, whether for crawling, uptime checks, or origin health checks.

Helpful bots follow rules, identify themselves clearly, and serve a defined purpose. Harmful bots hide their identity, overload systems, or attempt to exploit vulnerabilities. Based on most neutral discussions, gollupilqea1.1 bot is usually grouped closer to the helpful or neutral side, especially when it respects standard protocols.

This distinction matters because blocking all bots can keep search engines from indexing your pages, cut off uptime monitoring, and leave you with less insight into who is actually requesting your site.

Security Considerations and Best Practices

Even if a bot appears harmless, security teams should always stay vigilant. Monitoring behavior over time is more effective than reacting to a single spike in traffic. Rate limiting, firewall rules, and bot detection tools are standard practices that help maintain control.
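Rate limiting in particular is easy to picture in code. The following is a minimal in-memory sketch of a sliding-window limiter keyed by client, for example by IP address or user agent. The class name and the limits are assumptions for illustration. Production setups usually enforce this at the web server, proxy, or firewall instead.

```python
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most max_requests per client within the last window_seconds."""

    def __init__(self, max_requests, window_seconds):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._hits = defaultdict(deque)  # client key -> timestamps of allowed requests

    def allow(self, client_key, now):
        hits = self._hits[client_key]
        # Drop timestamps that have aged out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False
        hits.append(now)
        return True


# Illustrative limits: 3 requests per 10 seconds for each client.
limiter = SlidingWindowLimiter(max_requests=3, window_seconds=10.0)
for second in (0.0, 1.0, 2.0, 3.0, 10.0):
    print(second, limiter.allow("gollupilqea1.1 bot", now=second))
```

The timestamp is passed in explicitly so the logic is easy to test. In a running service you would typically pass `time.monotonic()`.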

For identifiers like gollupilqea1.1 bot, the recommended approach is observation first. Look at request frequency, accessed URLs, and response codes. If the bot behaves responsibly, there is often no reason to intervene.
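Those three signals can be summarized with a few lines of code once log entries have been parsed. The sketch below assumes each entry is already a dictionary with `agent`, `time`, `path`, and `status` keys. That record shape and the sample data are assumptions for illustration, and `summarize_bot` is a hypothetical helper.

```python
from collections import Counter
from datetime import datetime


def summarize_bot(records, agent):
    """Summarize request rate, most requested paths, and status codes for one user agent."""
    hits = [r for r in records if r["agent"] == agent]
    if not hits:
        return None
    times = [r["time"] for r in hits]
    # Treat very short observation spans as one minute to avoid inflated rates.
    span_minutes = max((max(times) - min(times)).total_seconds() / 60, 1.0)
    return {
        "requests": len(hits),
        "requests_per_minute": len(hits) / span_minutes,
        "top_paths": Counter(r["path"] for r in hits).most_common(3),
        "status_codes": dict(Counter(r["status"] for r in hits)),
    }


# Invented records, for illustration only.
BOT = "gollupilqea1.1 bot"
SAMPLE_RECORDS = [
    {"agent": BOT, "time": datetime(2024, 10, 10, 13, 0, 0), "path": "/", "status": 200},
    {"agent": BOT, "time": datetime(2024, 10, 10, 13, 0, 30), "path": "/blog", "status": 200},
    {"agent": BOT, "time": datetime(2024, 10, 10, 13, 1, 0), "path": "/blog", "status": 200},
    {"agent": BOT, "time": datetime(2024, 10, 10, 13, 2, 0), "path": "/missing", "status": 404},
    {"agent": "Mozilla/5.0", "time": datetime(2024, 10, 10, 13, 1, 30), "path": "/", "status": 200},
]

print(summarize_bot(SAMPLE_RECORDS, BOT))
```

A steady request rate, a sensible set of paths, and mostly successful status codes suggest well-behaved automation. A high share of 404 or 403 responses suggests probing.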

As one cybersecurity analyst put it:

“The goal is not to eliminate bots, but to understand them well enough that they never become a problem.”

This mindset encourages proactive management rather than reactive blocking.

Use Cases in Development and Testing

Bots are indispensable in development workflows. Automated testing bots check whether updates break existing functionality. Load testing bots simulate traffic surges to see how systems perform under pressure.

In these scenarios, unique identifiers are common. They help teams distinguish test traffic from real users. References to gollupilqea1.1 bot in testing contexts suggest it may serve such internal or controlled roles, rather than public-facing scraping or indexing.
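As a sketch of how a team might tag its own test traffic, the example below builds a request with a distinctive `User-Agent` header and shows how the receiving side could recognize it. The identifier, the URL, and both function names are hypothetical, and no request is actually sent.

```python
from urllib.request import Request

# Hypothetical identifier a team might choose for its own load tests.
TEST_AGENT = "example-loadtest/0.1 (internal testing)"


def build_test_request(url, agent=TEST_AGENT):
    """Build (but do not send) a request tagged with the test user agent."""
    return Request(url, headers={"User-Agent": agent})


def is_test_traffic(user_agent, known_test_agents=(TEST_AGENT,)):
    """Return True if a logged user agent matches one of the team's test identifiers."""
    return user_agent in known_test_agents


request = build_test_request("https://staging.example.com/health")
# urllib stores header names capitalized as "User-agent".
print(request.get_header("User-agent"))
print(is_test_traffic(request.get_header("User-agent")))
```

Because the same string appears in both the sender and the log filter, test requests can be excluded from analytics without guessing.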

Understanding this use case helps demystify why such bots exist and why their names often look unusual.

Ethical Considerations Around Automation

Ethics in automation is an emerging topic. Just because something can be automated does not mean it should be done without transparency. Ethical bots respect privacy, avoid collecting personal data unnecessarily, and follow published guidelines.

When people discuss gollupilqea1.1 bot responsibly, they often focus on whether it adheres to these principles. Transparency and respect for system limits are key indicators of ethical automation.

This conversation is especially important as automation becomes more powerful and widespread.

How Website Owners Should Respond

If you discover traffic labeled with gollupilqea1.1 bot, the first step is calm analysis. Check whether the operator has published any documentation for the identifier, review your logs, and compare the bot's behavior against known standards such as robots.txt. Knee-jerk reactions can cause more harm than good.

Many website owners find that unfamiliar bots have no noticeable negative impact. Others may decide to restrict access if resources are limited. The right response depends on context, goals, and infrastructure.

The important thing is making informed decisions rather than acting out of fear or assumption.

The Role of Transparency in Bot Design

Clear identification benefits everyone. When bots identify themselves accurately, administrators can make better decisions. Obscure naming is not always malicious, but transparency builds trust.

If gollupilqea1.1 bot is part of a controlled system, clear documentation and predictable behavior help reduce confusion. This is a lesson many developers are increasingly taking to heart.

Future Trends in Bot Technology

Automation is not slowing down. Bots are becoming more adaptive, more efficient, and more integrated into daily operations. Future bots will likely combine rule-based logic with learning capabilities, blurring the line between traditional bots and AI agents.

In that landscape, identifiers like gollupilqea1.1 bot may become more common, reflecting iterative development rather than mysterious intent. Understanding them today prepares us for more complex systems tomorrow.

Quotes From Industry Perspectives

“Bots are tools. Like any tool, their value depends on how responsibly they’re used.”

“The presence of a bot doesn’t automatically mean risk; context always matters.”

These perspectives highlight why balanced understanding is essential when evaluating automated agents.

Conclusion

The conversation around gollupilqea1.1 bot reflects a broader reality of the modern internet: automation is everywhere, and not all of it is dangerous or confusing once you understand the basics. By learning how bots work, why versioning exists, and how to analyze behavior calmly, website owners and professionals can make smarter decisions.

Rather than fearing unfamiliar identifiers, the smarter approach is education, observation, and thoughtful management. In doing so, you stay ahead of issues while benefiting from the efficiencies automation brings.

FAQ

What is gollupilqea1.1 bot?

gollupilqea1.1 bot is commonly referenced as a versioned automated agent identifier seen in logs or analytics, often linked to structured and rule-based automation rather than random activity.

Is gollupilqea1.1 bot harmful to websites?

In most discussions, gollupilqea1.1 bot is not considered inherently harmful. Its impact depends on behavior, frequency, and whether it follows standard web protocols.

Why does gollupilqea1.1 bot appear in server logs?

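It appears in logs because web servers record the User-Agent header sent with each request, and bots use that header to identify themselves. This helps administrators track and analyze automated traffic. Keep in mind that the header is self-reported, so the name alone does not prove who is behind the traffic.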

Should I block gollupilqea1.1 bot?

Blocking should be based on evidence, not assumption. If gollupilqea1.1 bot behaves responsibly and does not strain resources, many site owners choose to allow it.

How can I monitor gollupilqea1.1 bot activity?

You can monitor activity by reviewing server logs, using analytics tools, and observing request patterns over time to understand its behavior and intent.